(guest post by Jinzhao Wang)

Hawking famously showed that black holes radiate just like a blackbody. Behaving like a thermodynamic object, a black hole has an entropy equal to a quarter of its horizon area (in units of the Planck area), which is now known as the Bekenstein-Hawking (BH) entropy. Through several thought experiments, Bekenstein had already reached this conclusion, up to the 1/4 prefactor, before Hawking’s calculation. He also reasoned that a black hole is the most entropic object in the universe, in the sense that one cannot pack entropy more efficiently into a region bounded by the same area and containing the same mass than a black hole does. This is known as the Bekenstein bound. In Hawking’s approach, the BH entropy can be deduced from the gravitational partition function computed using the gravitational path integral (GPI), just as entropy is derived from the partition function in statistical physics.

However, Hawking’s discovery led to a new problem that called into question a fundamental principle of physics: the information carried by a closed system cannot be destroyed under evolution. This cherished principle is encoded in the unitarity of quantum theory. On the other hand, since the radiation has a relatively featureless thermal spectrum, it cannot preserve all the information a star contains before it collapses into a black hole, nor the information carried by the objects that later fall into it. If the radiation is all there is after the complete evaporation, the information is apparently lost. If we are not willing to give up our well-established theories, one way out is to speculate that the black hole never really evaporates away but somehow stops evaporating and becomes a long-lived remnant when it has shrunk to the Planckian size. All the entropy production is then due to the correlation with the remaining black hole. While this could be plausible, there is already tension long before the black hole approaches the end of its life. If we examine the radiation after the black hole passes its half-life, the radiation entropy keeps rising according to Hawking, and this entropy has to be attributed to the correlation with the remaining black hole. This means that the middle-aged black hole has to be as entropic as the radiation, but this is impossible without violating the Bekenstein bound. In fact, Page famously argued that if we suppose a black hole indeed operates with some unknown unitary evolution, then typically the radiation entropy should start to go down at its half-life, in contrast to Hawking’s calculation. We refer to this tension past the Page time as the entropic information puzzle. The challenge is to derive the entropy curve that Page predicted, i.e. the Page curve, using a first-principle gravity calculation.
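To make Page's argument concrete, here is a minimal numerical sketch of his model. It assumes (as Page did) that the black hole plus radiation is in a Haar-random pure state, which we model with a few qubits; the qubit count `N`, the sample size and the function names are illustrative choices and not taken from any gravity calculation. The sampled entanglement entropy of the "radiation" qubits is compared with Page's closed formula for the average entropy.

```python
import numpy as np

def page_entropy(m, n):
    """Page's average entropy (in nats) of a subsystem of dimension m
    in a random pure state on dimension m * n, written for m <= n."""
    if m > n:
        m, n = n, m
    return sum(1.0 / k for k in range(n + 1, m * n + 1)) - (m - 1) / (2.0 * n)

def sampled_entropy(num_qubits, k, rng, samples=20):
    """Average entanglement entropy (nats) of k 'radiation' qubits
    in Haar-random pure states of num_qubits qubits."""
    d_rad, d_bh = 2 ** k, 2 ** (num_qubits - k)
    total = 0.0
    for _ in range(samples):
        # A normalized complex Gaussian vector is Haar-random.
        psi = rng.normal(size=(d_rad, d_bh)) + 1j * rng.normal(size=(d_rad, d_bh))
        psi /= np.linalg.norm(psi)
        # Squared singular values are the eigenvalues of the reduced state.
        p = np.linalg.svd(psi, compute_uv=False) ** 2
        p = p[p > 1e-15]
        total += -np.sum(p * np.log(p))
    return total / samples

rng = np.random.default_rng(0)
N = 8  # toy number of qubits (assumption for illustration)
curve = [sampled_entropy(N, k, rng) for k in range(N + 1)]
page = [page_entropy(2 ** k, 2 ** (N - k)) for k in range(N + 1)]
```

The list `curve` rises until half of the qubits have been "radiated" and then falls back to zero, which is the qualitative shape of the Page curve; a thermal calculation in the style of Hawking would instead keep growing with `k`.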

Recently, significant progress (see here and here) has been made to resolve the entropic information puzzle (cf. this review article and the references therein). The entropy of radiation is calculated in semiclassical gravity with the GPI à la Hawking, and the Page curve is derived. Remarkably and unexpectedly, the Page curve can be obtained in the semiclassical regime without postulating radical new physics. The new ingredient is the replica trick, which essentially probes the radiation spectrum with many copies of the black hole. The idea is that we’d like to compute all the moments $\mathrm{Tr}(\rho_R^n)$ of the radiation density matrix $\rho_R$, where we rewrite each moment as the expectation value $\mathrm{Tr}(\rho_R^n) = \mathrm{Tr}(\rho_R^{\otimes n}\,\tau_n)$ of the n-fold swap operator $\tau_n$ on n identical and independent replicas of the state $\rho_R$. The trouble is we don’t know explicitly what $\rho_R$ is; rather, our current understanding of quantum gravity only allows us to describe the moments we’d like to compute implicitly in terms of an n-replica partition function $Z_n$ (suitably normalized) with appropriately chosen boundary conditions:

$$Z_n = \mathrm{Tr}(\rho_R^n),$$

where the LHS is what we really compute in gravity and we postulate on the RHS that this partition function gives the moments of $\rho_R$ that we want.
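The rewriting of a moment as an expectation value of the n-fold swap operator can be checked numerically on a small example. The sketch below is only a sanity check of that linear-algebra identity: the random density matrix `rho` stands in for the unknown radiation state, and the swap operator is implemented as a cyclic shift of the n tensor factors. All names are illustrative.

```python
import itertools
import numpy as np

def random_density_matrix(d, rng):
    """A random d x d density matrix (positive semidefinite, unit trace)."""
    a = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
    rho = a @ a.conj().T
    return rho / np.trace(rho).real

def cyclic_shift_operator(d, n):
    """Permutation matrix sending |i1, ..., in> to |in, i1, ..., i(n-1)>."""
    dim = d ** n
    op = np.zeros((dim, dim))
    for idx in itertools.product(range(d), repeat=n):
        shifted = idx[-1:] + idx[:-1]
        row = np.ravel_multi_index(shifted, (d,) * n)
        col = np.ravel_multi_index(idx, (d,) * n)
        op[row, col] = 1.0
    return op

def moment_direct(rho, n):
    """Tr(rho^n) computed by matrix multiplication."""
    return np.trace(np.linalg.matrix_power(rho, n)).real

def moment_via_replicas(rho, n):
    """Tr(rho^{tensor n} . shift) computed on n explicit replicas."""
    d = rho.shape[0]
    replicas = rho
    for _ in range(n - 1):
        replicas = np.kron(replicas, rho)
    return np.trace(replicas @ cyclic_shift_operator(d, n)).real

rng = np.random.default_rng(1)
rho = random_density_matrix(3, rng)
pairs = [(moment_direct(rho, n), moment_via_replicas(rho, n)) for n in range(1, 5)]
```

Each pair in `pairs` agrees up to floating-point error. In gravity we do not have `rho` in hand, which is exactly why the replica partition function is used as an implicit definition of the right-hand side.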

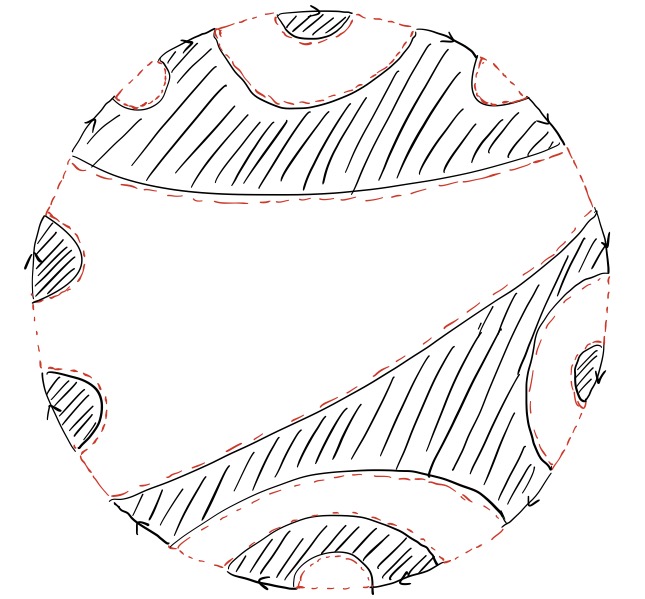

To evaluate the n-replica partition function $Z_n$, the GPI sums over all legit configurations, such as all sorts of metrics, topologies, and matter fields, consistent with the given boundary conditions. In particular, new geometric configurations show up and modify Hawking’s result. These geometries connect different replicas and are called replica wormholes. Since Hawking only ever considered a single black hole scenario, he missed these wormhole contributions in his calculation. In practice, performing the GPI over all the wormhole configurations can be technically difficult and one needs to resort to some simplifications and approximations. For the entropy calculation, one often drops all the wormholes but the maximally symmetric one that connects all the replicas. This approximation leads to a handy formula, called the island formula, for computing the radiation entropy and thus the Page curve. However, we should keep in mind that sometimes this approximation can be bad and the island formula needs a large correction. It would be interesting to see when and how this happens.

Fortunately, there is a toy model of an evaporating black hole due to Penington-Shenker-Stanford-Yang (PSSY), in which one can resolve the spectrum of the radiation density matrix without compromise. This model simplifies the technical setup as much as possible while still keeping the essence of the entropic information puzzle. This recent paper computes the radiation entropy by implementing the full GPI and identifies the large corrections to the commonly used island formula. Interestingly, the key ingredient is free probability. The GPI becomes tractable after being translated into the free probabilistic language. Here we summarise the main ideas. In the replica trick GPI, the wormholes are organized by the non-crossing partitions. Feynman taught us to sum over all contributions weighted by the exponential of the gravity action evaluated on the wormholes (wormhole contributions). Then the resulting n-replica partition function (i.e. the nth moment of the radiation density matrix $\rho_R$) is equal to the sum over the wormhole contributions, matching exactly with the free moment-cumulant relation. Therefore, the wormhole contributions should be treated as free cumulants. Furthermore, the matter field propagating on a particular wormhole configuration (labeled by a non-crossing partition $\pi$) is organized by the Kreweras complement of $\pi$. Together the total contribution to the n-replica partition function from both the wormholes and the matter field on them amounts to a free multiplicative convolution. It is a convolution between two implicit probability distributions encoding the quantum information from the gravity sector and the matter sector. With this observation, one can then evaluate the free multiplicative convolution using tools from free harmonic analysis to resolve the radiation spectrum and thus the Page curve.
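The combinatorics described above can be illustrated with a short brute-force sketch. It enumerates non-crossing partitions, evaluates the free moment-cumulant relation, and evaluates the sum over a partition and its Kreweras complement that gives the moments of a product of two free variables. The inputs here are toy choices (free Poisson cumulants and Catalan moments), not the actual PSSY wormhole and matter data, and the function names are hypothetical.

```python
from itertools import combinations
from math import comb, prod

def set_partitions(elements):
    """Yield all set partitions of a list, each as a list of sorted blocks."""
    if not elements:
        yield []
        return
    first, rest = elements[0], elements[1:]
    for partition in set_partitions(rest):
        yield [[first]] + partition  # `first` forms its own block
        for i in range(len(partition)):  # or joins an existing block
            yield partition[:i] + [[first] + partition[i]] + partition[i + 1:]

def is_noncrossing(partition):
    """True if no i < j < k < l has i, k in one block and j, l in another."""
    for a in partition:
        for b in partition:
            if a is b:
                continue
            for i, k in combinations(sorted(a), 2):
                for j, l in combinations(sorted(b), 2):
                    if i < j < k < l:
                        return False
    return True

def noncrossing_partitions(n):
    return [p for p in set_partitions(list(range(1, n + 1))) if is_noncrossing(p)]

def kreweras_complement(partition, n):
    """Kreweras complement via permutations: K(pi) = pi^{-1} composed with
    the long cycle i -> i + 1 (mod n); cycles of the result are its blocks."""
    nxt = {}
    for block in partition:
        b = sorted(block)
        for pos, x in enumerate(b):
            nxt[x] = b[(pos + 1) % len(b)]
    inverse = {v: k for k, v in nxt.items()}
    perm = {i: inverse[i % n + 1] for i in range(1, n + 1)}
    seen, blocks = set(), []
    for start in range(1, n + 1):
        if start in seen:
            continue
        block, x = [], start
        while x not in seen:
            seen.add(x)
            block.append(x)
            x = perm[x]
        blocks.append(sorted(block))
    return blocks

def moment_from_free_cumulants(n, kappa):
    """m_n = sum over non-crossing pi of the product of kappa(|block|)."""
    return sum(prod(kappa(len(b)) for b in p) for p in noncrossing_partitions(n))

def product_moment(n, kappa_a, moment_b):
    """n-th moment of a product of free a and b: cumulants of a on pi,
    moments of b on the Kreweras complement of pi."""
    return sum(
        prod(kappa_a(len(b)) for b in p)
        * prod(moment_b(len(b)) for b in kreweras_complement(p, n))
        for p in noncrossing_partitions(n)
    )

def catalan(k):
    return comb(2 * k, k) // (k + 1)

free_poisson = [moment_from_free_cumulants(n, lambda k: 1) for n in range(1, 7)]
product = [product_moment(n, lambda k: 1, catalan) for n in range(1, 6)]
```

With all free cumulants set to 1, `free_poisson` reproduces the Catalan numbers (the moments of the Marchenko-Pastur law of rate 1), and `product` reproduces the Fuss-Catalan numbers `comb(3 * n, n) // (2 * n + 1)`, the moments of the free multiplicative convolution of two such laws. In the gravitational reading, the cumulant factor plays the role of the wormhole contribution and the moment factor that of the matter field on the complementary regions.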

By modeling the free random variables with random matrices, we can go one step further and deduce from the convolution that the radiation spectrum matches the one obtained from a generalized version of Page’s model. Therefore, we really start from the first-principle gravity calculation to address the challenge that Page posed, and free probability helps to make this connection clear and precise in the context of the PSSY model. What remains to be understood is why freeness is relevant here in the first place. To what extent is free probability useful in quantum gravity and is there a natural reason for freeness to emerge? Free probability already has applications in quantum many-body physics (cf. the previous post). If we think of quantum gravity as a quantum many-body problem, some aspects of it can be understood in terms of random tensor networks. This viewpoint has been very successful in the context of the AdS/CFT correspondence. In this view, freeness can plausibly be ubiquitous in gravity thanks to the random tensors. Another hint comes from concrete quantum mechanical models such as the SYK model, from which a simple gravity theory can emerge in the low energy regime. The earlier work of Pluma and Speicher drew the connection between the double-scaled SYK model and the q-Brownian motion. Perhaps quantum gravity is calling for new species of non-commutative probability theories that differ from the usual quantum theory.

There is a subtle logical inconsistency that we should address. The postulate above is not exactly correct. The free convolution indicates that the radiation spectrum obtained is generically continuous, suggesting that we are dealing with a random radiation density matrix. Hence, in hindsight, it’s more appropriate to say that the GPI is computing the expected n-th moment $\mathbb{E}[\mathrm{Tr}(\rho_R^n)]$ of the radiation density matrix $\rho_R$. However, this is puzzling because the extra ensemble average $\mathbb{E}$ radically violates the usual Born rule in quantum physics.

In fact, we can give a proper physical explanation within the standard quantum theory. This was pointed out in this other recent paper, leveraging the power of the quantum de Finetti theorem. The key is to make a weaker postulate: the implicit state upon which we evaluate the n-fold swap operator $\tau_n$ should be allowed to be correlated, instead of independent, among the n replicas. Let’s denote this joint radiation state as $\rho_R^{(n)}$, which may not be a product state $\rho_R^{\otimes n}$ as postulated above. This is because in the GPI for the n-replica partition function $Z_n$, one only imposes the boundary conditions, so gravity could automatically correlate the state in the bulk even if one means to prepare the replicas independently. It’s hence too strong to postulate that the joint radiation state implicitly defined via the boundary conditions has the product form $\rho_R^{\otimes n}$. Nonetheless, this joint state $\rho_R^{(n)}$ should still be permutation-invariant because we don’t distinguish the replicas. Even better, it should also be exchangeable (meaning that the quantum state can be treated as a marginal of a larger permutation-invariant state) because we can in principle consider an infinite number of replicas and randomly sample n replicas to evaluate $\tau_n$. This allows us to invoke the de Finetti theorem to deduce that the joint radiation state on n replicas is a convex combination over identical and independent replica states $\sigma^{\otimes n}$ with some probability measure $\mu$:

$$\rho_R^{(n)} = \int \mathrm{d}\mu(\sigma)\, \sigma^{\otimes n}.$$

The de Finetti theorem thus naturally brings in an ensemble average that is consistent with the result of the free convolution calculation. Interestingly, one can further show that the de Finetti theorem implies that the replica trick really computes the regularized entropy $\lim_{n\to\infty} \frac{1}{n} S(\rho_R^{(n)})$, where $\rho_R^{(n)}$ denotes the joint radiation state on n replicas, i.e. the averaged radiation entropy of infinitely many replica black holes. It is to be contrasted with the radiation entropy of a single black hole, which can be much bigger because of the uncertainty in the de Finetti measure $\mu$. The latter is closer to what Hawking calculated, and he was wrong because the entropy contribution due to the probability measure $\mu$ is not what we are after. This ensemble reflects that our theory of quantum gravity is too incomplete to pin down the exact description of the radiation, but we really shouldn’t attribute this uncertainty to the physical entropy of radiation. The gist of the replica trick is that with many copies the contribution from $\mu$ is washed out in the regularized entropy because it doesn’t scale with the number of replicas. Therefore, when someone actually goes out and operationally measures the radiation entropy, she has to prepare many copies of the black hole and sample them to deduce the measurement statistics, just like for measuring any quantum observable. Then she will find herself dealing with a de Finetti state, where $\mu$ acts like a Bayesian prior that reflects our ignorance of the fundamental theory. Nonetheless, the measurement shall reveal the truth and help update the prior to peak at some particular replica state $\sigma$. Hence, operationally the entropy measured should never depend on how uncertain the prior is. This is perhaps a better explanation of what Hawking did wrong. These conceptual issues are now clarified thanks to the wisdom of de Finetti.
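The difference between the single-copy entropy and the regularized entropy can be seen already in a classical caricature of a de Finetti state. The sketch below is only an analogy under stated assumptions: the "replica state" is a biased coin, the prior puts equal weight on two biases, and the joint state of n replicas is the corresponding mixture of i.i.d. coin flips. None of the numbers come from the gravity calculation.

```python
from math import comb, log2

def binary_entropy(p):
    """Shannon entropy (bits) of a coin with bias p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * log2(p) - (1 - p) * log2(1 - p)

def mixture_entropy(n, weights, biases):
    """Shannon entropy (bits) of n flips drawn from a mixture of i.i.d. coins.
    Strings with the same number of heads k share the same probability."""
    total = 0.0
    for k in range(n + 1):
        prob_string = sum(
            w * p ** k * (1 - p) ** (n - k) for w, p in zip(weights, biases)
        )
        total -= comb(n, k) * prob_string * log2(prob_string)
    return total

weights = [0.5, 0.5]  # toy prior over coins (assumption)
biases = [0.1, 0.9]   # toy replica states (assumption)
n = 200

single_copy = mixture_entropy(1, weights, biases)
regularized = mixture_entropy(n, weights, biases) / n
average = sum(w * binary_entropy(p) for w, p in zip(weights, biases))
```

Here `single_copy` is one full bit, because a single flip cannot tell the two coins apart, while `regularized` is within `1 / n` bits of `average`, the prior-averaged entropy of an individual coin. The entropy of the prior contributes at most one bit in total, so its share per replica vanishes as n grows, which is the classical shadow of the statement that the uncertainty in the prior does not count towards the physical entropy.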